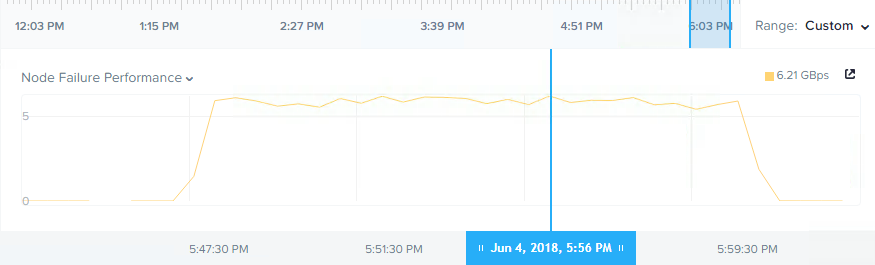

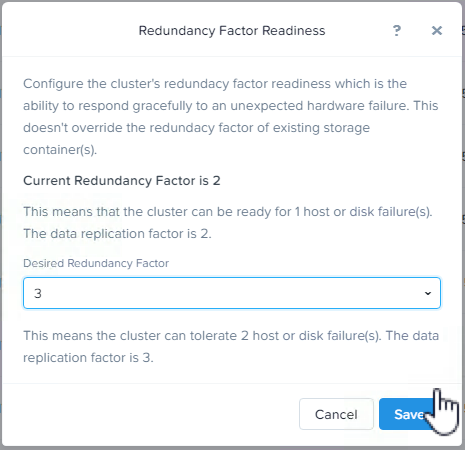

In the earlier parts of this series we’ve talked about how ADSF can recover quickly from a node failure by re-protecting data in a distributed manner across the cluster. We also covered how resiliency can be increased from Resiliency Factor 2 (RF2) to RF3, and even changed to a more space-efficient Erasure Coding (EC-X) configuration, all without interruption.

Now let’s cover the critically important topic of how VMs are impacted during Nutanix Controller VM (CVM) maintenance, such as AOS upgrades, or during failures, such as the CVM crashing or even being accidentally or maliciously turned off.

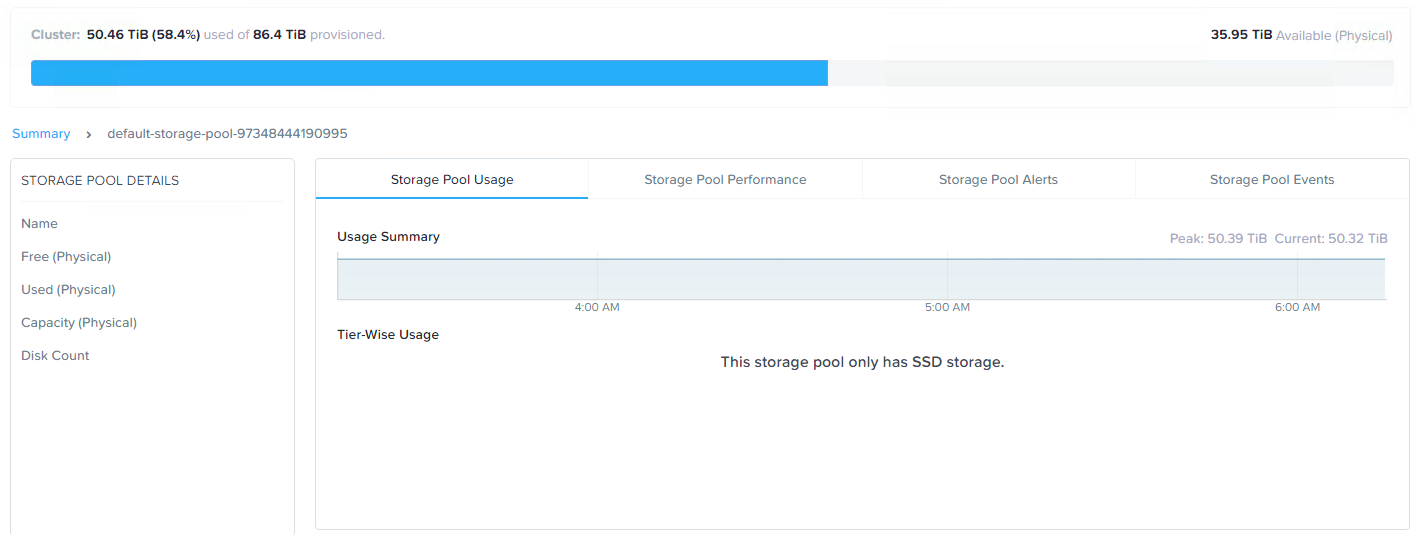

Let’s quickly cover the basics of how Nutanix ADSF writes and protects data.

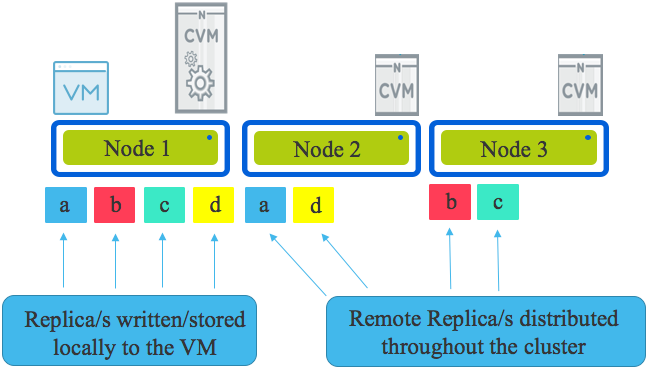

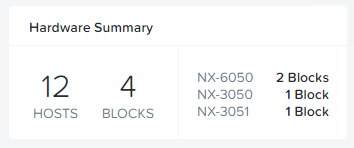

Looking at the following diagram, we see a three node cluster with a single Virtual Machine. The VM has written some data, represented by a, b, c and d. Under normal circumstances all writes will have one replica written to the host running the VM (in this case Node 1) and the other replica (or replicas in the case of RF3) distributed throughout the cluster based on disk fitness values. The disk fitness values (or what I call “Intelligent replica placement”) ensure data is placed in the most optimal place the first time.
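To make the placement rule above concrete, here is a minimal Python sketch. It is an illustration only, not how ADSF is implemented: the `Node` class, the single `fitness` score per node and the "pick the highest score" rule are all assumptions made for the example. The real disk fitness calculation is not described in this post.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    fitness: float  # hypothetical score: higher means a better placement target


def place_replicas(nodes, local_name, rf=2):
    """Return the names of the nodes that receive the rf replicas of one write.

    One replica always goes to the node running the VM (local_name).
    The remaining rf - 1 replicas go to the remote nodes with the best
    (assumed) fitness score.
    """
    if rf > len(nodes):
        raise ValueError("resiliency factor cannot exceed the node count")
    remote = [n for n in nodes if n.name != local_name]
    remote.sort(key=lambda n: n.fitness, reverse=True)
    return [local_name] + [n.name for n in remote[: rf - 1]]


nodes = [Node("node1", 0.5), Node("node2", 0.9), Node("node3", 0.7)]
print(place_replicas(nodes, "node1", rf=2))  # ['node1', 'node2']
print(place_replicas(nodes, "node1", rf=3))  # ['node1', 'node2', 'node3']
```

The point of the sketch is simply that the local replica is fixed by where the VM runs, while the remote replicas are chosen per write, which is what spreads data across the cluster.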

If one or more nodes are added to the cluster, the Intelligent replica placement will send proportionally more replicas to those nodes until the cluster is in a balanced state. In the very unlikely event that no new writes are occurring, ADSF has a background disk balancing process which will balance the cluster as a low priority task.

Now that we know the basics of how Nutanix protects data using multiple replicas (called “Resiliency Factor”) let’s talk about what happens during a Nutanix ADSF storage layer upgrade.

Upgrades are initiated by a one-click process and performed in a rolling style, one controller VM (CVM) at a time, regardless of the configured Resiliency Factor and whether or not Erasure Coding (EC-X) is used. Put simply, the rolling upgrade takes one CVM offline, performs the upgrade, performs a self check, rejoins the CVM to the cluster and then repeats the process on the next CVM.
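The rolling upgrade loop described above can be sketched in a few lines of Python. This is a simplified model, not the actual one-click upgrade code: the `upgrade` and `self_check` callables and the CVM names are placeholders I have made up for the example.

```python
def rolling_upgrade(cvms, upgrade, self_check):
    """Upgrade CVMs one at a time, halting if a self check fails.

    Returns the list of upgraded CVMs and the largest number of CVMs
    that were offline at the same moment (expected to be 1).
    """
    online = set(cvms)
    upgraded = []
    max_offline = 0
    for cvm in cvms:
        online.discard(cvm)  # take exactly one CVM offline
        max_offline = max(max_offline, len(cvms) - len(online))
        upgrade(cvm)
        if not self_check(cvm):
            # Do not move on to the next CVM if this one is unhealthy.
            raise RuntimeError(f"{cvm} failed its self check; upgrade halted")
        online.add(cvm)  # rejoin the cluster before touching the next CVM
        upgraded.append(cvm)
    return upgraded, max_offline


order, max_offline = rolling_upgrade(
    ["cvm-a", "cvm-b", "cvm-c"],
    upgrade=lambda cvm: None,      # placeholder for the real upgrade step
    self_check=lambda cvm: True,   # placeholder health check
)
print(order, max_offline)  # ['cvm-a', 'cvm-b', 'cvm-c'] 1
```

Because a CVM only rejoins after passing its self check, and the next CVM is only taken offline after that, the cluster never has more than one controller down as a result of the upgrade itself.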

One of the many advantages of Nutanix decoupling the storage from the hypervisor (i.e.: not embedding storage into the kernel) is that upgrades and even storage layer failures do not impact the running Virtual machines.

VMs do not need to be restarted (e.g. as in an HA event) nor do they need to migrate (e.g. vMotion) to another node. VMs continue without interruption to storage traffic even when the local controller is offline for any reason.

If the local CVM is down for maintenance or due to failure, the I/O is dynamically re-directed throughout the cluster.

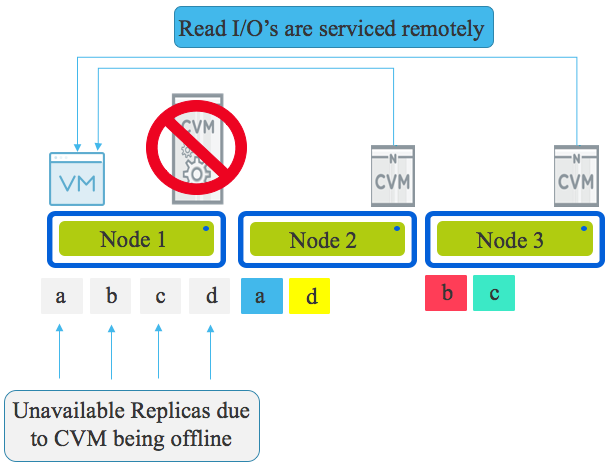

Let’s look at a Read I/O when the CVM local to a VM is offline (for any reason).

The local CVM being offline means the physical drives (NVMe, SSD, HDD etc) are not available, so the local data (replicas) is unavailable.

All read I/O will be redirected and continue to function as it will now be served by all CVMs in the cluster.

This maintenance/failure scenario could be compared to a 3 Tier architecture, in that the node running the VM is not currently providing storage and is instead connecting to storage over a network. But as Nutanix is a distributed architecture, all remaining nodes within the cluster service the reads. This means that in the worst case scenario of a three node cluster, during a failure or maintenance, Nutanix has an equivalent architecture to an optimally performing dual controller storage array.

Let’s cover that one more time: in the WORST case scenario, where the smallest cluster has suffered a failure (or is under maintenance) causing the read I/O to be served remotely, Nutanix in a degraded state is at worst equivalent to a compute node accessing a dual controller storage array in its OPTIMAL state.

If the Nutanix cluster was for example eight nodes and one node was performing maintenance or the CVM was down for any reason, seven nodes would be serving IO to the VMs on that node. This process is actually nothing new and something Nutanix has done for a long time. It’s described in more detail in Acropolis Hypervisor (AHV) I/O Failover & Load Balancing which was published in July 2015.
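The arithmetic behind the three node and eight node examples is easy to check with a small Python sketch. The function and node names are assumptions for illustration, and the model is deliberately simple: it only counts which CVMs are available to serve reads, and ignores which replicas actually live on which node.

```python
def cvms_serving_reads(cluster_nodes, vm_node, offline=frozenset()):
    """Return the nodes whose CVMs can serve reads for a VM on vm_node.

    If the local CVM is online, reads are served locally. If it is
    offline, reads are redirected to every CVM that is still online.
    """
    if vm_node not in offline:
        return [vm_node]
    return [n for n in cluster_nodes if n not in offline]


three_nodes = ["node1", "node2", "node3"]
eight_nodes = [f"node{i}" for i in range(1, 9)]

# Local CVM on node1 is down in both cases.
print(len(cvms_serving_reads(three_nodes, "node1", {"node1"})))  # 2
print(len(cvms_serving_reads(eight_nodes, "node1", {"node1"})))  # 7
```

With the local CVM down, the smallest cluster still has two controllers serving the VM, and the eight node cluster has seven.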

Once the local CVM is back online, Read I/O is once again serviced by the local CVM, and the only remote reads which occur will be in the case where a copy of the data does not exist on the local node. When remote reads occur, the 1MB extent which holds the data being read will be localised so that subsequent reads are local. It’s critical to understand that localising the extent (replica) adds no additional network overhead compared to the remote read itself, so localising benefits performance without additional overheads.
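A minimal Python sketch of the localisation behaviour described above is shown below. The `Node` class and `read_extent` function are hypothetical names for this example, and the sketch only models the local node keeping a copy of what it read. It does not model what happens to the remote replica afterwards.

```python
EXTENT_SIZE = 1024 * 1024  # 1MB extent, as described in the text


class Node:
    def __init__(self, name):
        self.name = name
        self.extents = {}  # extent_id -> bytes held on this node


def read_extent(local, remotes, extent_id):
    """Read an extent, localising it if it had to be fetched remotely.

    Returns (data, source) where source is "local" or "remote".
    """
    if extent_id in local.extents:
        return local.extents[extent_id], "local"
    for node in remotes:
        if extent_id in node.extents:
            data = node.extents[extent_id]
            # The data has already crossed the network to satisfy this
            # read, so keeping it locally costs no extra network traffic.
            local.extents[extent_id] = data
            return data, "remote"
    raise KeyError(f"extent {extent_id} not found on any node")


node1, node2 = Node("node1"), Node("node2")
node2.extents["e1"] = b"\x00" * EXTENT_SIZE

print(read_extent(node1, [node2], "e1")[1])  # remote
print(read_extent(node1, [node2], "e1")[1])  # local
```

The first read is remote and the second is local, which is the whole benefit: one network transfer serves both the read and the localisation.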

Summary:

- ADSF writes data on the node where the VM resides to ensure subsequent reads are local.

- Read I/O is serviced by the local CVM, and when the local CVM is unavailable for any reason the read I/O is serviced by all remaining CVMs in the cluster in a distributed manner.

- Virtual machines do not need to be failed over or evacuated from a node when the local CVM is offline due to maintenance or failure.

- In the worst case scenario of a 3 node cluster and a CVM down, a virtual machine running on Nutanix has its traffic serviced by at least two storage controllers, which is the best case scenario for a Server + Dual Controller Storage Array (3 Tier) architecture.

- In clusters larger than three, Virtual machines on Nutanix enjoy more storage controllers serving their read I/O than an optimal scenario for a Server + Dual Controller Storage Array (3 Tier) architecture.

Index:

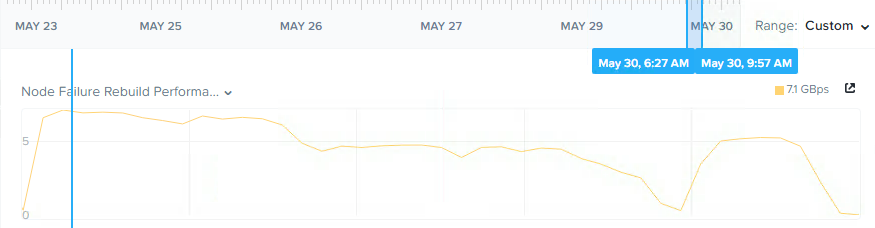

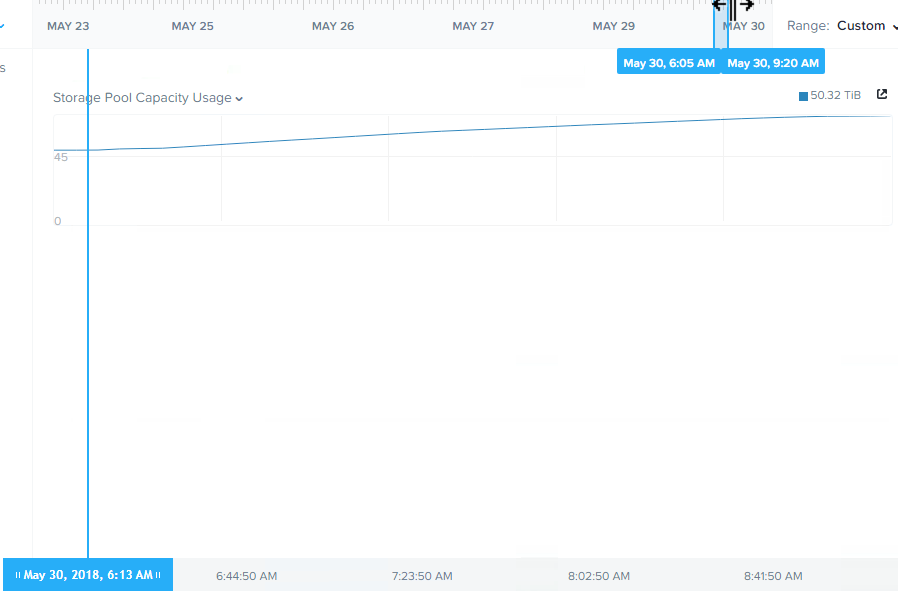

Part 1 – Node failure rebuild performance

Part 2 – Converting from RF2 to RF3

Part 3 – Node failure rebuild performance with RF3

Part 4 – Converting RF3 to Erasure Coding (EC-X)

Part 5 – Read I/O during CVM maintenance or failures

Part 6 – Write I/O during CVM maintenance or failures

Part 7 – Read & Write I/O during Hypervisor upgrades

Part 8 – Node failure rebuild performance with RF3 & Erasure Coding (EC-X)

Part 9 – Self healing

Part 10 – Disk Scrubbing / Checksums