At .NEXT 2015, Nutanix announced the Scale out File Server Tech Preview, which was supported in AHV environments only. With the imminent release of AOS 4.7, the Scale out File Server has been renamed Acropolis File Services (AFS) and will now be GA for both AHV and ESXi.

AFS provides what I personally refer to as an “invisible” file server experience, because it can be set up with just a few clicks in PRISM without the need to deploy any operating systems.

AFS provides a highly available, distributed single namespace across 3 or more front-end VMs, which are automatically deployed and maintained by the Acropolis Distributed Storage Fabric (ADSF). The image below shows a mixed cluster of 10 nodes, made up of 8 x NX3060 and 2 x NX6035C nodes, with the AFS UVMs spread across the cluster.

Data is stored on the underlying ADSF in a Container, which can be configured with your desired level of resiliency (e.g. RF2 or RF3) as well as data reduction features such as Compression, Deduplication and Erasure Coding.
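
To put RF2 and RF3 into numbers: with replication every block is stored RF times, so usable capacity is roughly raw capacity divided by RF, while erasure coding stores k data blocks plus m parity blocks per strip. The following Python is a rough sketch under my own assumptions: `ContainerPolicy` and `usable_tib` are hypothetical names (not a Nutanix API), the 4+1 strip is illustrative only, and it ignores CVM overhead, metadata, reserved free space and data reduction savings. In practice EC-X is only applied to data that is no longer being actively written, so real results land somewhere between the replication and erasure coded figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContainerPolicy:
    """Hypothetical container settings for illustration; not a Nutanix API."""
    replication_factor: int      # 2 (RF2) or 3 (RF3)
    ec_data_blocks: int = 0      # k in a k+m erasure coded strip; 0 means EC-X is off
    ec_parity_blocks: int = 0    # m parity blocks per strip


def overhead(policy: ContainerPolicy) -> float:
    """Raw bytes consumed per logical byte, ignoring metadata, reserves and data reduction."""
    if policy.replication_factor not in (2, 3):
        raise ValueError("replication factor must be 2 or 3")
    if policy.ec_data_blocks or policy.ec_parity_blocks:
        k, m = policy.ec_data_blocks, policy.ec_parity_blocks
        if k < 1 or m < 1:
            raise ValueError("an erasure coded strip needs data and parity blocks")
        # Erasure coding stores k data blocks plus m parity blocks per strip.
        return (k + m) / k
    # Plain replication stores RF full copies of every block.
    return float(policy.replication_factor)


def usable_tib(raw_tib: float, policy: ContainerPolicy) -> float:
    """Approximate usable capacity (TiB) for a given raw capacity."""
    return raw_tib / overhead(policy)
```

For 100 TiB of raw capacity, `usable_tib(100, ContainerPolicy(2))` gives 50.0 TiB, while `ContainerPolicy(2, 4, 1)` (the illustrative 4+1 strip) gives 80.0 TiB.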

AFS inherits all of the resiliency that ADSF natively provides and supports operational tasks such as one-click rolling upgrades of AOS and the hypervisor without impacting the availability of file services.

Functionality

Backups

Nutanix will provide AFS with native support for local recovery points on the primary storage (cluster), and will support both Async-DR (60-minute RPO) and Sync-DR (zero RPO) so that data can be backed up to a remote cluster.
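
The RPO figures translate directly into a worst-case data loss window: with Async-DR, anything written since the last replicated recovery point is at risk, whereas with synchronous replication a write is only acknowledged once both sites have it. A minimal Python sketch, assuming snapshots start at a known time, run exactly every 60 minutes and finish replicating instantly (real replication takes time, which widens the window); `last_recovery_point` and `data_loss_window` are hypothetical helpers:

```python
from datetime import datetime, timedelta

# Async-DR schedule from the text; assumed to run exactly every 60 minutes.
ASYNC_INTERVAL = timedelta(minutes=60)


def last_recovery_point(failure: datetime, first_snapshot: datetime,
                        interval: timedelta = ASYNC_INTERVAL) -> datetime:
    """Most recent scheduled snapshot at or before the failure (hypothetical helper)."""
    if failure < first_snapshot:
        raise ValueError("failure happened before the first snapshot")
    completed = (failure - first_snapshot) // interval  # whole intervals elapsed
    return first_snapshot + completed * interval


def data_loss_window(failure: datetime, first_snapshot: datetime,
                     synchronous: bool = False,
                     interval: timedelta = ASYNC_INTERVAL) -> timedelta:
    """Changes that exist only on the failed cluster at the time of failure."""
    if synchronous:
        # Synchronous replication acknowledges writes only once both sites have them.
        return timedelta(0)
    return failure - last_recovery_point(failure, first_snapshot, interval)
```

With the first snapshot at 00:00 and a failure at 02:45, the last recovery point is 02:00, so up to 45 minutes of changes would be lost with Async-DR and none with Sync-DR.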

For customers who employ 3rd party backup tools, AFS can also simply be backed up as an SMB share, which is a common capability amongst backup vendors such as Commvault and NetBackup.

The image below shows, at a high level, what a 3rd party backup solution looks like with AFS.

Quotas

AFS also allows administrators to set quotas to help with capacity management, especially in multi-tenant or departmental deployments, to prevent individual users from monopolising capacity in the environment.
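
Conceptually, a hard quota is a per-owner limit that is checked before a write is accepted. The sketch below models that idea in Python; `QuotaTable`, `set_quota`, `try_write` and `release` are hypothetical names for illustration, not the AFS interface, and the real enforcement behaviour may differ.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Quota:
    limit_bytes: int
    used_bytes: int = 0


@dataclass
class QuotaTable:
    """Hard quotas per user or department (hypothetical model, not the AFS API)."""
    quotas: Dict[str, Quota] = field(default_factory=dict)

    def set_quota(self, owner: str, limit_bytes: int) -> None:
        # Keep any usage already recorded against an existing quota.
        current = self.quotas.get(owner)
        used = current.used_bytes if current else 0
        self.quotas[owner] = Quota(limit_bytes, used)

    def try_write(self, owner: str, nbytes: int) -> bool:
        """Accept the write only if it keeps the owner within their limit."""
        quota = self.quotas.get(owner)
        if quota is None:
            return True  # no quota configured for this owner; usage is not tracked
        if quota.used_bytes + nbytes > quota.limit_bytes:
            return False  # would consume more than the allotted capacity
        quota.used_bytes += nbytes
        return True

    def release(self, owner: str, nbytes: int) -> None:
        """Return capacity to the owner when files are deleted."""
        quota = self.quotas.get(owner)
        if quota is not None:
            quota.used_bytes = max(0, quota.used_bytes - nbytes)
```

A department with a 100-byte quota can write 60 and then 40 bytes, but a further write is rejected until something is deleted and `release` is called.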

Patching/Upgrades

Acropolis File Services can be upgraded and patched separately from AOS and the underlying hypervisor. This ensures that the AFS version is not dependent on the AOS or hypervisor versions, which also makes QA easier and minimizes the chance of bugs, since the AFS layer is abstracted from AOS and the hypervisor.

This is similar to how the AOS version is not dependent on the hypervisor version, ensuring maximum flexibility and stability for customers. It means that as new features and improvements are added, AFS can be upgraded via PRISM without worrying about interoperability and dependencies.

Patches and upgrades are one-click, rolling and non-disruptive, just like AOS upgrades.
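
A rolling upgrade can be pictured as a loop that takes one UVM out of service at a time and only moves on once it is back. This Python sketch assumes, rather than documents, that the remaining UVMs keep serving the namespace (including the clients of the UVM being upgraded) while it is down; `FileServerVM`, `rolling_upgrade`, the `upgrade_one` callback and the version strings in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class FileServerVM:
    name: str
    version: str
    healthy: bool = True


def rolling_upgrade(uvms: List[FileServerVM], target: str,
                    upgrade_one: Callable[[FileServerVM, str], None]) -> List[str]:
    """Upgrade UVMs one at a time so the others keep serving; returns the upgrade order."""
    upgraded = []
    for vm in uvms:
        if vm.version == target:
            continue  # already done, so the loop is safe to re-run after an interruption
        if not all(peer.healthy for peer in uvms if peer is not vm):
            raise RuntimeError(f"not upgrading {vm.name}: another UVM is unhealthy")
        vm.healthy = False          # out of service; peers are assumed to cover its clients
        upgrade_one(vm, target)     # hypothetical hook that performs the actual upgrade
        vm.version = target
        vm.healthy = True           # back in service before the next UVM is touched
        upgraded.append(vm.name)
    return upgraded
```

The health check before each step stops the upgrade rather than taking a second UVM offline, and if `upgrade_one` fails the UVM stays marked unhealthy, so a re-run refuses to continue until it is fixed.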

Scaling

As the file serving workload increases, Acropolis File Services can be scaled out by simply adding instances and balancing the workload across them. If the Nutanix cluster has more nodes than AFS instances, this can be done quickly and easily through PRISM.

If, for example, the cluster has 4 nodes and 4 AFS instances are already deployed, the performance of the AFS environment can be scaled either by increasing the UVMs' vCPU/vRAM (scale up) OR by adding nodes to the cluster and scaling out the AFS instances.
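
The choice between scaling out and scaling up can be written as a small decision function. This Python sketch assumes that each UVM of a given AFS instance runs on its own Compute+Storage node, which matches the layouts described in this post but is an assumption rather than a documented rule; `scaling_options` is a hypothetical helper.

```python
from typing import List


def scaling_options(hosting_nodes: int, uvms: int) -> List[str]:
    """Ways to add file serving performance to one AFS instance.

    hosting_nodes: Compute+Storage nodes able to run a UVM.
    uvms: UVMs already deployed for this AFS instance.
    Assumes at most one UVM of the instance per node (an assumption, not a documented rule).
    """
    if uvms > hosting_nodes:
        raise ValueError("more UVMs than hosting nodes breaks the one-UVM-per-node assumption")
    # Scaling up is assumed to be available: give the existing UVMs more vCPU/vRAM.
    options = ["scale up: add vCPU/vRAM to the existing UVMs"]
    if hosting_nodes > uvms:
        options.append(f"scale out: add up to {hosting_nodes - uvms} UVM(s) via PRISM")
    else:
        options.append("scale out: add nodes to the cluster, then add UVMs")
    return options
```

For the 4 node, 4 UVM example, the scale out option becomes "add nodes first", while a larger cluster with spare nodes can simply add UVMs.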

When one or more additional AFS instances (UVMs) are added, the workload is automatically balanced across all UVMs in the environment. ADSF also automatically balances new and existing file server data across the cluster to ensure even capacity utilization across nodes, as well as consistent performance and linear scaling.
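
The exact placement logic AFS uses is not covered here, so treat the following Python as an illustration of the idea rather than the real algorithm: each UVM owns a set of shares (a hypothetical unit of ownership with a hypothetical load weight), and when a new, empty UVM joins, shares are moved greedily from the busiest UVM to the least busy one while each move narrows the gap between them. It only models client workload; the underlying data placement is handled separately by ADSF.

```python
from typing import Dict, List


def rebalance(assignment: Dict[str, List[str]], load: Dict[str, float]) -> Dict[str, List[str]]:
    """Greedy rebalancing of shares across UVMs (hypothetical sketch, not the AFS algorithm)."""
    plan = {uvm: list(shares) for uvm, shares in assignment.items()}  # leave input untouched

    def total(uvm: str) -> float:
        return sum(load[s] for s in plan[uvm])

    while True:
        busiest = max(plan, key=total)
        idlest = min(plan, key=total)
        gap = total(busiest) - total(idlest)
        # Moving a share of weight w changes this pair's gap to |gap - 2w|,
        # which only helps if 0 < w < gap.
        movable = [s for s in plan[busiest] if 0 < load[s] < gap]
        if not movable:
            return plan
        # Each move strictly lowers the sum of squared UVM loads, so the loop terminates.
        share = min(movable, key=lambda s: abs(gap - 2 * load[s]))
        plan[busiest].remove(share)
        plan[idlest].append(share)
```

Adding an empty `uvm3` alongside two UVMs that each hold three equally busy shares ends with two shares per UVM.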

In short, AFS provides both scale-up and scale-out options.

Interoperability with Storage Only nodes

Acropolis File Services is fully supported in environments using storage only nodes. Because storage only nodes provide a Nutanix CVM and their underlying storage to ADSF, their capacity and performance are made available to AFS just as they are to any other VM. The only requirement is 3 or more Compute+Storage nodes in the cluster to host the minimum of 3 AFS UVMs.
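
The rule above boils down to two checks: storage only nodes add capacity to the pool, but only Compute+Storage nodes can host UVMs. A small Python sketch, where `Node`, `can_deploy_afs`, `pool_raw_tib` and the capacities used in the example are hypothetical, and where giving each UVM its own node is my reading of the 3 node minimum:

```python
from dataclasses import dataclass
from typing import List

MIN_AFS_UVMS = 3  # minimum number of AFS UVMs, per the text


@dataclass(frozen=True)
class Node:
    name: str
    raw_tib: float
    storage_only: bool = False  # runs a CVM that contributes storage, but hosts no UVMs


def can_deploy_afs(nodes: List[Node], uvms: int = MIN_AFS_UVMS) -> bool:
    """Enough Compute+Storage nodes to give each UVM its own host?"""
    if uvms < MIN_AFS_UVMS:
        return False
    hosts = [n for n in nodes if not n.storage_only]
    return len(hosts) >= uvms


def pool_raw_tib(nodes: List[Node]) -> float:
    """Every node's CVM contributes to ADSF, including storage only nodes."""
    return sum(n.raw_tib for n in nodes)
```

A cluster of 2 Compute+Storage nodes plus 2 storage only nodes offers plenty of capacity but cannot host AFS until a third Compute+Storage node is added.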

AFS deployment examples

Acropolis File Services can be deployed on existing Nutanix clusters, allowing file data to be co-located in the same storage pool as existing virtual machine data, as well as data from physical or virtual servers utilising Acropolis Block Services (ABS).

Acropolis File Services can also be deployed on dedicated clusters, such as clusters of storage heavy and storage only nodes, for environments which do not have virtual machines or for very large environments, while still being centrally managed along with other Nutanix clusters via PRISM Central.

Multi-tenancy

AFS also allows multiple separate instances to be deployed in the same Nutanix cluster to serve different security zones, tenants or use cases. The following shows an example of a 4 node Nutanix cluster with two instances of AFS: the first has 4 UVMs and the second has just 3. Each instance can have different data reduction settings (Compression, Dedupe, EC-X) and be scaled independently.
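
How two instances might share a 4 node cluster can be sketched as a placement routine: each instance keeps its own data reduction settings, and its UVMs are spread so that no two UVMs of the same instance land on the same node. That anti-affinity rule, the least-busy-node tie-breaking, and the names `AfsInstance` and `place` are assumptions for illustration, not a description of how AFS actually places its UVMs.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AfsInstance:
    name: str
    uvm_count: int
    # Per-instance data reduction settings (hypothetical field names).
    compression: bool = False
    dedupe: bool = False
    ec_x: bool = False


def place(instances: List[AfsInstance], nodes: List[str]) -> Dict[str, List[str]]:
    """Map each node to the UVMs it hosts; UVMs of one instance never share a node."""
    hosted: Dict[str, List[str]] = {node: [] for node in nodes}
    for inst in instances:
        if inst.uvm_count > len(nodes):
            raise ValueError(f"{inst.name} needs {inst.uvm_count} nodes, cluster has {len(nodes)}")
        # Least busy nodes first; sorted() is stable, so ties keep the original node order.
        targets = sorted(nodes, key=lambda node: len(hosted[node]))[: inst.uvm_count]
        for i, node in enumerate(targets, start=1):
            hosted[node].append(f"{inst.name}-uvm{i}")
    return hosted
```

With nodes n1 to n4, placing a 4 UVM instance and then a 3 UVM instance leaves n1 to n3 hosting one UVM from each instance and n4 hosting only the fourth UVM of the first instance, consistent with the example above.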

Summary:

- AFS supports multiple hypervisors and is deployed in minutes from PRISM

- Can be scaled both up and out to support more users, capacity and/or performance

- Interoperable with all OEMs and node types including storage only

- Supports non-disruptive one-click rolling upgrades

- Supports multiple AFS instances on a single cluster for multi-tenancy and security zone support

- Has native local recovery point support as well as remote backup (Sync and Async) support

- All data is protected by the underlying ADSF

- Supports all ADSF data reduction technologies including Compression, Dedupe and Erasure Coding.

- Eliminates the need for a separate silo for file sharing

- Capacity available to AFS is automatically expanded as nodes are added to the cluster.

Related .NEXT 2016 Posts

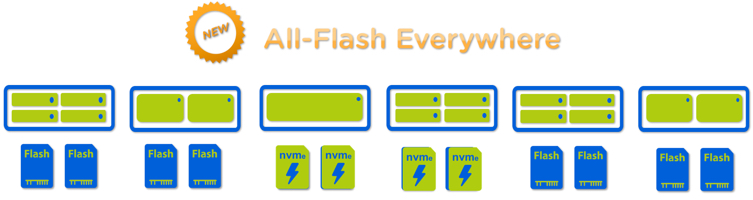

- What’s .NEXT 2016 – All Flash Everywhere!

- What’s .NEXT 2016 – Acropolis File Services

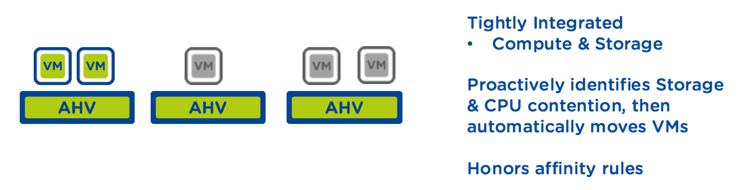

- What’s .NEXT 2016 – Acropolis X-Fit

- What’s .NEXT 2016 – Any node can be storage only

- What’s .NEXT 2016 – Metro Availability Witness

- What’s .NEXT 2016 – PRISM integrated Network configuration for AHV

- What’s .NEXT 2016 – Enhanced & Adaptive Compression

- What’s .NEXT 2016 – Acropolis Block Services

- What’s .NEXT 2016 – Self Service Restore