Help! My performance is terrible and my consultant/vendor says it’s due to high/excessive CPU overcommitment! What do I do next?

Question: “How much CPU overcommitment is ok?”.

The answer is of course “It depends”, and there are many factors including, but not limited to, workload type, physical CPUs and how complementary the workloads (other VMs) are.

Other common questions include:

“How much overcommitment do I have now?”

&

“How do I know if overcommitment is causing a performance problem?”.

Let’s start with “How much overcommitment do I have now?”.

With Nutanix this is very easy to work out. First go to the Hardware page in PRISM and click Diagram, then select one of your nodes as shown below.

Once you’ve done that, the “Summary” section will show the CPU Model, No. of CPU Cores and No. of Sockets as shown below.

In this case we have 2 sockets and 20 cores in total, which works out to 10 physical cores per socket.

If you have multiple node types in your cluster, repeat this step for each different node type in your cluster. Then simply add up the total number of physical cores in the cluster.

In my example, I have three nodes, each with 20 cores for a total of 60 physical cores.

Next we need to find out how many vCPUs we’ve provisioned in the cluster. This can be found on the “VMs” page in PRISM as shown below.

So we have our 3 node cluster with 60 physical cores (pCores) and we have provisioned 130 vCPUs.

Now we can input the details into my vSphere Cluster Sizing Calculator and work out the overcommitment including our desired availability level (in my case, N+1) and we get the following:

The calculator is designed to be conservative and show information assuming the resources (CPU/RAM) required for the configured availability level are removed from the calculation. Put simply, the vCPU:pCore ratio assumes the N+1 host is not in the cluster which is how I personally size environments, especially for business critical applications.

The calculator shows us we have a 3.25:1 vCPU:pCore ratio.
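For anyone who wants to check the calculator's figure by hand, here is a minimal sketch of the same arithmetic. The function name and parameters are my own for illustration and are not taken from the calculator itself. It simply removes the hosts reserved for availability before dividing.

```python
def vcpu_to_pcore_ratio(vcpus, cores_per_node, nodes, spare_nodes=0):
    """Return the vCPU:pCore ratio, excluding hosts reserved for availability.

    spare_nodes=1 models N+1, i.e. one host's cores are left out of the maths.
    """
    usable_nodes = nodes - spare_nodes
    if usable_nodes <= 0:
        raise ValueError("no usable nodes left after reserving spare hosts")
    return vcpus / (cores_per_node * usable_nodes)


# Values from the example cluster: 3 nodes x 20 cores, 130 vCPUs provisioned.
raw = vcpu_to_pcore_ratio(130, 20, 3)
n_plus_1 = vcpu_to_pcore_ratio(130, 20, 3, spare_nodes=1)
print(f"All hosts: {raw:.2f}:1, N+1: {n_plus_1:.2f}:1")
# prints "All hosts: 2.17:1, N+1: 3.25:1"
```

Note the gap between the two numbers: counting all three hosts makes the cluster look far less overcommitted than it will be during a host failure.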

For business critical applications like SQL, Exchange, Oracle, SAP etc, I always recommend sizing without CPU overcommitment (so <= 1:1) and ensuring the VMs are right sized to avoid poor performance and wasted resources.

Now that we know our overcommitment ratio, what’s next?

We need to find out if our overcommitment level is consistent with our original design and assess how the Virtual Machines are performing in the current state. A good design should call out the application requirements and critical performance factors such as CPU overcommitment and VM placement (e.g.: DRS Rules).

“How do I know if overcommitment is causing a performance problem?”.

One of the best ways to measure if a VM has CPU scheduling contention is by looking at “CPU Ready” or “Stolen time” in the AHV (or KVM) world.

CPU Ready is basically the delay between the time when the VM requests to be scheduled onto CPU cores and the time when it’s actually scheduled. One of the easiest ways to present this is as a percentage of the total time that the VM is waiting to be scheduled.
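As a rough sketch of that percentage: if your tooling reports ready time in milliseconds accumulated over a sample interval (as the vSphere performance charts do), it can be converted as below. The function name, parameters and sample values are assumptions for illustration. Dividing by the vCPU count to get a per-vCPU average is also an assumption about how you want to read a VM-level total.

```python
def cpu_ready_percent(ready_ms, interval_s, vcpus=1):
    """Convert accumulated ready time (ms) over a sample interval to a percentage.

    vcpus > 1 averages a VM-level total across its vCPUs (an assumption;
    leave it at 1 when the value is already per vCPU).
    """
    if interval_s <= 0 or vcpus <= 0:
        raise ValueError("interval_s and vcpus must be positive")
    interval_ms = interval_s * 1000
    return 100 * ready_ms / (interval_ms * vcpus)


# Hypothetical sample: 1000 ms of ready time in a 20 second interval.
print(f"{cpu_ready_percent(1000, 20):.2f}%")  # prints "5.00%"
```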

How Much CPU Ready is OK? My rule of thumb is:

<2.5% CPU Ready

Generally No Problem.

2.5%-5% CPU Ready

Minimal contention that should be monitored during peak times

5%-10% CPU Ready

Significant Contention that should be investigated & addressed

>10% CPU Ready

Serious Contention to be investigated & addressed ASAP!

With that said, the impact of CPU Ready will vary depending on your application so even 1% should not be ignored especially for business critical applications.
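The rule of thumb above can be sketched as a small helper, for example to flag VMs in a monitoring script. The function name is hypothetical, and how the exact boundary values (2.5, 5 and 10) are assigned is my assumption, since the bands above share their endpoints.

```python
def classify_cpu_ready(percent):
    """Map a CPU Ready percentage onto the rule-of-thumb bands."""
    if percent < 0:
        raise ValueError("percent cannot be negative")
    if percent < 2.5:
        return "Generally no problem"
    if percent < 5:
        return "Minimal contention, monitor during peak times"
    if percent <= 10:
        return "Significant contention, investigate and address"
    return "Serious contention, investigate and address ASAP"


for sample in (1.0, 3.0, 7.5, 12.0):
    print(f"{sample}% -> {classify_cpu_ready(sample)}")
```

Treat the output as a starting point only. As noted above, a business critical application may warrant investigation well inside the first band.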

As CPU Ready is a critical performance metric, Nutanix decided to display this in PRISM on a per VM basis so customers can easily identify CPU scheduling contention.

Below we see the summary of a VM’s performance, which can be found on the VMs page in PRISM after highlighting a VM. At the bottom of the page we see a graph showing CPU Ready.

CPU Ready of <2.5% is unlikely to be causing major issues for the majority of VMs, but in some latency sensitive applications like databases or video/voice, 2.5% could be causing noticeable issues so never disregard looking into CPU ready in your troubleshooting.

I recommend looking at a VM and, if it’s showing even minimal CPU Ready (say >1%) and it’s a business critical application, following the troubleshooting steps in this article until CPU Ready is <0.5%, then measuring the performance difference.

Key Point: If you have applications like SQL Always On availability groups, Oracle RAC or Exchange DAGs, one VM suffering CPU Ready will likely be having a flow-on impact to the other VMs trying to communicate with (or replicate to) it. So ensure all “dependencies” for your VM/app are not suffering CPU Ready before looking into other areas.

In short, Server A with no CPU Ready can be impacted when trying to communicate to Server B and being delayed because Server B has High CPU Ready.

The reason I bring this up is because it’s important not to get tunnel vision when looking at performance problems.

Now to the fun part, Troubleshooting/Resolutions to CPU Ready!

1. Right size your VMs

Do NOT ignore this step! Whatever your CPU overcommitment ratio is, right sizing will always improve the efficiency and performance of your VMs. There is an increasing overhead at the hypervisor layer for scheduling more vCPUs, even with no overcommitment, so ensure VMs are not oversized.

A common misconception is that 90% CPU utilisation is a bottleneck, in fact this can be a sign of a right sized VM. We need to ensure vCPUs are sized for peaks but unless a VM is pinned at 100% CPU for long periods of time, a short spike to 100% is not necessarily a problem.

Here is an example of the benefits of VM right sizing.

Once you have right sized your VMs, move onto step 2.

2. Size or place VMs within NUMA boundaries

First what is a NUMA boundary? It’s pretty simple, take the number of cores and divide by the number of sockets and that’s the NUMA boundary and also the maximum number of vCPUs a VM can be if you wish to benefit from maximum memory performance and optimal CPU scheduling.

The total host RAM is also a factor so divide the total RAM by the number of sockets and that’s the maximum RAM a VM can be assigned without breaching the NUMA boundary and paying an approx 30% performance penalty on memory performance.
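A minimal sketch of those two divisions follows. It assumes one NUMA node per socket, as the description above implies, and the function names and sample host values are illustrative only.

```python
def numa_boundary(total_cores, total_ram_gb, sockets):
    """Return (max vCPUs, max RAM in GB) that fit within one NUMA node."""
    if sockets <= 0:
        raise ValueError("sockets must be positive")
    return total_cores // sockets, total_ram_gb / sockets


def fits_numa_boundary(vm_vcpus, vm_ram_gb, total_cores, total_ram_gb, sockets):
    """True if the VM stays within a single NUMA node for both CPU and RAM."""
    max_vcpus, max_ram_gb = numa_boundary(total_cores, total_ram_gb, sockets)
    return vm_vcpus <= max_vcpus and vm_ram_gb <= max_ram_gb


# Illustrative host: 2 sockets, 20 cores and 256GB RAM in total.
print(numa_boundary(20, 256, 2))               # prints "(10, 128.0)"
print(fits_numa_boundary(10, 128, 20, 256, 2))  # prints "True"
print(fits_numa_boundary(12, 96, 20, 256, 2))   # prints "False"
```

The last call fails on vCPUs alone: 96GB fits comfortably, but 12 vCPUs is wider than the 10-core socket.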

Example: I had a customer who had MS Exchange running with 12vCPU / 96GB VMs on Nutanix nodes with 12 cores per socket. Exchange was running poorly (in the end due to a MS bug) but Microsoft support insisted the problem was insufficient CPU. So they forced the customer to increase the VM to 18 vCPUs.

This did not solve the performance problem AND in fact made performance worse, as the VM now suffered from much higher CPU Ready. VMs larger than a NUMA boundary can experience much higher CPU Ready, especially on hosts running other workloads. Moving back to 12 vCPUs relieved the CPU Ready and then Microsoft ultimately resolved the case with a patch.

3. Migrate other VMs off the host running the most critical VM

This is a really easy step to alleviate CPU scheduling contention and allows you to monitor the performance benefit of not having CPU overcommitment.

If the virtual machine’s performance improves you’ve likely found at least one of the causes of the performance problem. Now comes the harder part. Unless you can afford to have a single VM per host, you now need to identify complementary workloads to migrate back onto the host.

What’s a complementary workload?

I’m glad you asked! Let me give you an example.

Let’s say we have a 10vCPU / 128GB RAM SQL Server VM which is right sized (of course) and our host is the NX-8035-G4 with 2 sockets of 10 cores per socket (20 cores total) and 256GB RAM. Being SQL we’ll also assume it has high IO requirements as it’s the backend for a business critical application.

Being Nutanix we also have a Controller VM using some resources (say 8vCPUs and 32GB RAM). For those who are interested see: Cost vs Reward for the Nutanix Controller VM (CVM)

A complementary workload would have one or more of the following qualities:

a) Less than 96GB RAM (Host RAM 256GB, minus SQL VM 128GB, minus CVM 32GB = 96GB remaining)

b) vCPU requirements <= 2 (This would mean a 1:1 vCPU:pCore ratio)

c) Low vCPU requirements and/or utilization

d) Low IO requirements

e) Low capacity requirements (this would maximise the amount of SQL data which would remain local to the node for maximum read performance with data locality).

f) A workload which uses CPU/Storage at a different time of the day to the SQL workload.

e.g.: SQL might be busy 8am to 6pm, but the workload may drop significantly outside those hours. A VM with high CPU/Storage IO requirements that runs from 7pm to midnight would potentially be a very complementary workload, as it would allow higher overcommitment with minimal/no performance impact because the VMs’ hours of operation do not overlap.
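The headroom figures in (a) and (b), and the time-of-day check in (f), can be sketched as below. The class and function names are hypothetical, and treating busy periods as simple (start hour, end hour) pairs within one day is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class Allocation:
    vcpus: int
    ram_gb: int


def remaining_headroom(host_cores, host_ram_gb, allocations):
    """Return (vCPUs, RAM in GB) left on the host at a 1:1 vCPU:pCore ratio."""
    used_vcpus = sum(a.vcpus for a in allocations)
    used_ram_gb = sum(a.ram_gb for a in allocations)
    return host_cores - used_vcpus, host_ram_gb - used_ram_gb


def busy_hours_overlap(first, second):
    """True if two (start_hour, end_hour) busy periods on a 24h clock overlap."""
    return max(first[0], second[0]) < min(first[1], second[1])


# Host: 20 cores, 256GB RAM. Existing VMs: SQL (10 vCPU/128GB) and the CVM (8 vCPU/32GB).
sql_vm = Allocation(vcpus=10, ram_gb=128)
cvm = Allocation(vcpus=8, ram_gb=32)
print(remaining_headroom(20, 256, [sql_vm, cvm]))  # prints "(2, 96)"

# SQL busy 8am-6pm, candidate workload busy 7pm-midnight.
print(busy_hours_overlap((8, 18), (19, 24)))  # prints "False"
```

A candidate that fits the headroom and does not overlap the SQL busy window satisfies (a), (b) and (f). The IO and capacity qualities still need to be judged separately.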

4. Migrate the VM onto a node with more physical cores

This might be an obvious one but a node with more physical cores has more CPU scheduling flexibility which can help reduce CPU Ready. Even without increasing the vCPUs on the VM, the VM has a better chance of getting time on the physical cores and therefore should perform better.

5. Migrate the VM onto a node with a higher CPU clock-rate

Another somewhat obvious one, but it’s very common for vendors and customers to quote the number of vCPUs as a requirement when a “vCPU” is not a unit of measurement. A vCPU at best, with no overcommitment, is equal to one physical core and it goes downhill from there. Physical cores also vary in clock-rate (duh!) so a faster clock rate can have a huge impact on performance, especially for those pesky single threaded applications.

Note: CPUs with higher clock rates typically have fewer cores, so don’t make the mistake of moving a VM to a node where it exceeds the NUMA boundary!

6. Turn OFF advanced power management on the physical server & use “High Performance” as your policy (in ESXi)

Advanced Power Management settings can save power and in some cases have minimal impact on performance, but when troubleshooting performance problems, especially around business critical applications, I recommend eliminating Power Management as a potential cause and once the performance problem is resolved, test re-enabling it if you desire.

7. Enable Hyperthreading (HT)

Hyperthreads can provide significant CPU scheduling advantages and in many cases improve performance, despite a hyperthread generally providing only a fairly low overall performance gain (typically 10%-30%) in CPU benchmarks.

Long story short, a VM in a Ready state is doing NOTHING, so enabling HT can allow it to be doing SOMETHING, which is better than NOTHING!

Also hypervisors are pretty smart, they preferentially schedule vCPUs to pCores so the busy VMs will more often than not be on pCores while the VMs with low vCPU requirements can be scheduled to hyperthreads. Win/Win.

Note: Some vendors recommend turning HT off, such as Microsoft for Exchange. But, this recommendation is really only applicable to Exchange running on physical servers. For virtualization always, always leave HT enabled and size workloads like Exchange with 1:1 vCPU to pCore ratios, then you will achieve consistent, high performance.

For anyone struggling with a vendor (like Microsoft) who is insisting on disabling HT when running business critical apps, here is an Example Architectural Decision on Hyperthreading which may help you.

Example Architectural Decision – Hyperthreading with Business Critical Applications (Exchange 2013)

8. Add additional nodes to the cluster

If you have right sized, migrated VMs to nodes with complementary workloads, ensured optimal NUMA configurations, ensured critical VMs are running on the highest clock-rate CPUs etc and you’re still having performance problems, it may be time to bite the bullet and add one or more nodes to the cluster.

Additional nodes provide more CPU cores and therefore more CPU scheduling opportunities.

A common question I get is “Why can’t I just use CPU reservations on my critical VMs to guarantee them 100% of their CPU?”

In short, using CPU reservations does not solve CPU ready, I have also written an article on this topic – Common Mistake – Using CPU reservations to solve CPU ready

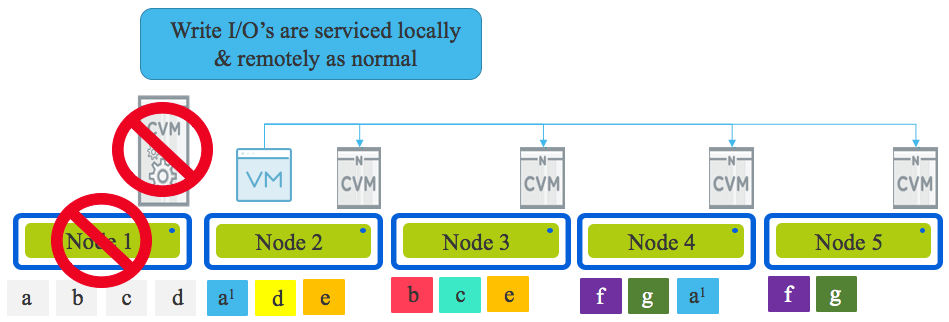

Wildcard: Add storage only nodes

Wait, what? Why would adding storage only nodes help with CPU contention?

It’s actually pretty simple, lower latency for read/write IO means less CPU WAIT which is the time the CPU is “waiting” for an IO to complete.

e.g.: If an I/O takes 1ms on Nutanix but 5ms on a traditional SAN, then moving the VM to Nutanix will mean 4ms less CPU WAIT per I/O for the VM, which means the VM can use its assigned vCPUs more efficiently.

Adding storage only nodes (even where the additional capacity is not required) will improve the average read/write latency in the cluster allowing VMs to be scheduled onto a physical core, get the work done, and release the pCore for another VM or to perform other work.
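To put the latency example in perspective, here is a deliberately simplified sketch. It assumes the I/Os are issued one at a time (no queue depth or parallelism), and the I/O count is a made-up figure for illustration, not a measurement.

```python
def total_io_wait_seconds(io_count, latency_ms):
    """Total time spent waiting on I/O if each I/O is issued serially."""
    return io_count * latency_ms / 1000


io_count = 10_000  # hypothetical number of serial I/Os in a batch job
fast = total_io_wait_seconds(io_count, 1)  # 1ms per I/O
slow = total_io_wait_seconds(io_count, 5)  # 5ms per I/O
print(f"{fast:.0f}s vs {slow:.0f}s, {slow - fast:.0f}s less waiting")
# prints "10s vs 50s, 40s less waiting"
```

Real workloads issue I/O in parallel, so the saving will not be this linear, but the direction is the same: less time waiting on I/O means the vCPU finishes its work and frees the pCore sooner.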

Note: Storage only nodes and the way data is distributed throughout the cluster is a unique capability for Nutanix. See the following article for an example of how performance is improved with storage only nodes with NO modification required to the VMs/Apps.

Scale out performance testing with Nutanix Storage Only Nodes

Summary:

There are a lot of things we can do to address CPU Ready issues, including thinking outside the box and enhancing the underlying storage with things like storage only nodes.

Other articles on CPU Ready

1. VM Right Sizing – An Example of the benefits

3. Common Mistake – Using CPU Reservations to solve CPU Ready